Proper gamma correction is probably the easiest, most inexpensive, and most widely applicable technique for improving image quality in real-time applications.

In this post, we are going to describe what gamma and gamma correction are, why they are important, the most common problems they cause, and how to solve them.

What is Gamma?

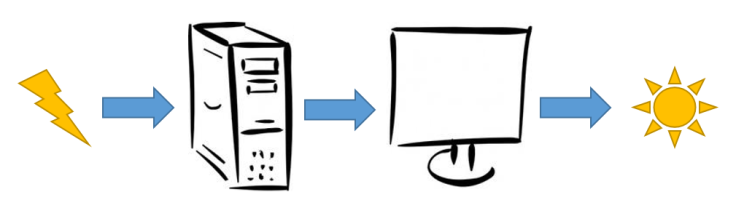

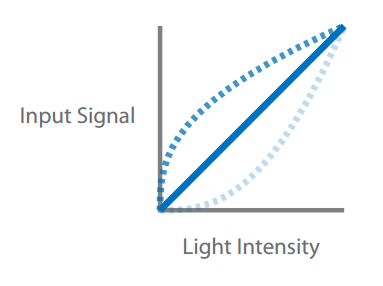

To display images on a monitor screen, an input voltage is applied.

The screen turns this voltage into light intensity.

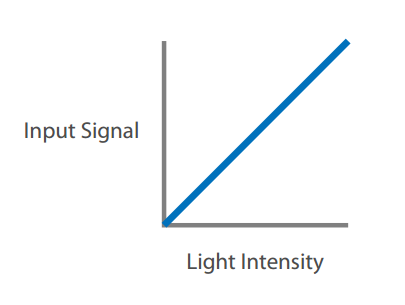

In a perfect world, the input would equal the output linearly.

But CRTs do not behave linearly when they convert voltages into light intensities. LCDs do not inherently have this property, but they are usually built to mimic the response of CRTs.

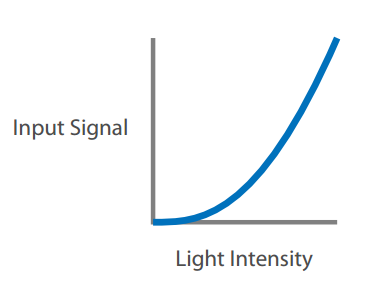

A monitor’s response is closer to a power curve (output = input^gamma), and the exponent of that curve is called gamma.

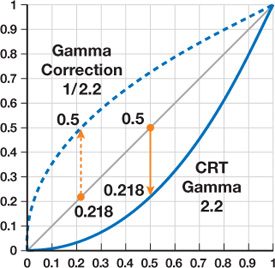

Gamma is different for every individual display device, but typically it is in the range of 2.0 to 2.4. Regardless of the exponent applied, the values of black (zero) and white (one) will always be the same. It’s the intermediate, mid-tone values that will be corrected or distorted.
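Here is a minimal sketch of that behavior, assuming the display can be modeled as a pure power curve (real panels only approximate this). The helper name `display_response` is made up for illustration and is not part of any API.

```python
def display_response(value: float, gamma: float = 2.2) -> float:
    """Model a display as a pure power curve: output = input ** gamma.

    Assumption: value is a normalized signal in the range [0, 1].
    """
    return value ** gamma


# Black (0) and white (1) stay fixed for any exponent; mid-tones move.
for gamma in (2.0, 2.2, 2.4):
    black = display_response(0.0, gamma)
    mid = display_response(0.5, gamma)
    white = display_response(1.0, gamma)
    print(f"gamma={gamma}: black={black:.3f} mid={mid:.3f} white={white:.3f}")
```

Whatever exponent you try in that range, only the middle column changes.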

How is color affected?

This is a linear ramp (linear because it has 10 equal steps). It is easy for a person to distinguish each step.

This is a Gamma curve of 2.2 applied to the above image. It dramatically darkens parts of the image. The difference between adjacent darks is almost negligible compared to the difference between adjacent whites.

This is an Inverse Gamma of 2.2 applied to the first image. It dramatically lightens the image. You will notice the steps between adjacent dark colors are huge and the difference between adjacent light colors is relatively small.

In both cases, Black is still Black, and White is still White, but the colors between them are shifted. In conclusion, when you apply a 2.2 Gamma curve to an image it will dramatically darken the image. If you apply an inverse 2.2 Gamma curve to an image, it will dramatically lighten the image. And finally, if you apply an inverse 2.2 Gamma curve to a 2.2 Gamma image, you will get the source image.
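The three ramps can be reproduced with a few lines of arithmetic. This is only a sketch: it assumes a pure 2.2 power curve and ten equally spaced patches from black to white, and the variable names are ours.

```python
GAMMA = 2.2  # assumed exponent, matching the ramps described above

# Ten equally spaced patches from black (0.0) to white (1.0).
ramp = [i / 9 for i in range(10)]

darkened = [v ** GAMMA for v in ramp]              # gamma 2.2 curve
lightened = [v ** (1.0 / GAMMA) for v in ramp]     # inverse gamma 2.2 curve
restored = [v ** (1.0 / GAMMA) for v in darkened]  # inverse applied to the gamma image

# Step size between the two darkest and the two lightest patches.
dark_step = darkened[1] - darkened[0]
light_step = darkened[-1] - darkened[-2]
print(f"gamma ramp: darkest step={dark_step:.4f}, lightest step={light_step:.4f}")

# Largest difference between the restored ramp and the original one.
print("max round-trip error:", max(abs(r - v) for r, v in zip(restored, ramp)))
```

The first and last entries of every list stay at 0.0 and 1.0, which is the "Black is still Black, and White is still White" observation in numbers.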

The human eye sees increasing intensities of light in a manner that resembles the inverse gamma curve. Small differences in dark colors are significant and small differences in light colors are hard to see.

A typical gamma of 2.2 means that a pixel at 50 percent intensity emits less than a quarter of the light of a pixel at 100 percent intensity, not half, as you would expect. For example, if you have the RGB color (0.5, 1.0, 0.5), you would expect to see the following color:

But because of the nonlinear scale that monitors employ, you actually see the following color:
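In numbers, and assuming a pure 2.2 power curve as the display model, the shift for that color looks roughly like this:

```python
GAMMA = 2.2  # assumed display exponent

intended = (0.5, 1.0, 0.5)

# What an uncorrected display would emit, channel by channel.
displayed = tuple(channel ** GAMMA for channel in intended)

print("intended :", intended)
print("displayed:", tuple(round(c, 3) for c in displayed))
```

The green channel is untouched because it is already at full intensity, while red and blue drop to about 0.22, so the color looks much more saturated and darker than intended.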

What is Gamma Correction?

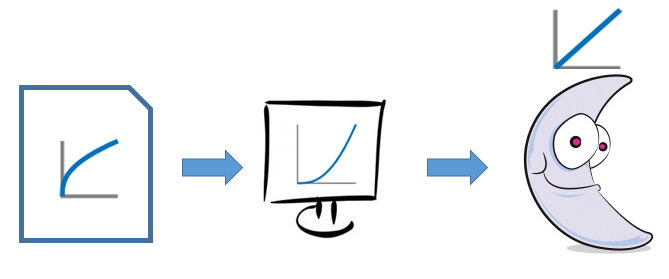

Gamma correction is the practice of applying the inverse of the monitor transformation to the image pixels before they’re displayed.

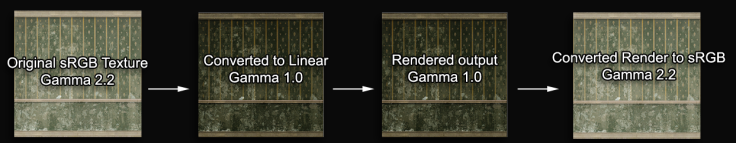

That is, if we raise pixel values to the power 1/gamma before display, the display's implicit raise to the power gamma cancels it out exactly, giving a linear response overall. Here is an example where gamma is 2.2:

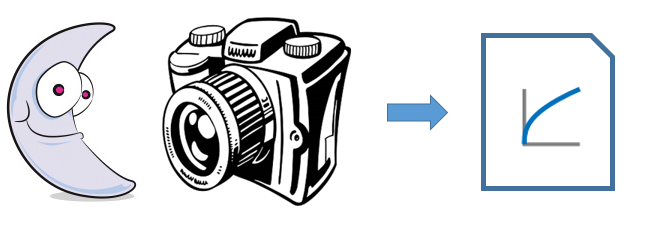
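One common way to apply this correction to 8-bit pixels is a 256-entry lookup table. The sketch below assumes a pure 2.2 power curve for the display; `correction_table` and `simulate_display` are illustrative names, not part of any library.

```python
GAMMA = 2.2  # assumed display exponent

# Lookup table: linear 8-bit value -> gamma-corrected 8-bit value.
correction_table = [round(255 * (i / 255) ** (1.0 / GAMMA)) for i in range(256)]


def simulate_display(code: int, gamma: float = GAMMA) -> float:
    """Model of the monitor: normalized light emitted for an 8-bit code."""
    return (code / 255) ** gamma


linear_value = 128                            # roughly 50 percent intensity
corrected = correction_table[linear_value]    # brighter code sent to the monitor
emitted = simulate_display(corrected)         # close to 128 / 255 again
print(f"linear={linear_value} corrected={corrected} emitted={emitted:.3f}")
```

The emitted light is not exactly 128 / 255 because the table rounds to 8 bits, but it is close enough to show the two curves canceling.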

What happens with image files?

Suppose you take a photo with your camera. Each pixel has an RGB representation. Instead of storing the raw pixel values directly in the file, each RGB channel is first raised to the power 1/gamma.

To make your monitor more vivid and make paint programs work the way you anticipate, the manufacturers apply an sRGB correction (pretty similar to a gamma 2.2 curve) to your colors.

What is sRGB?

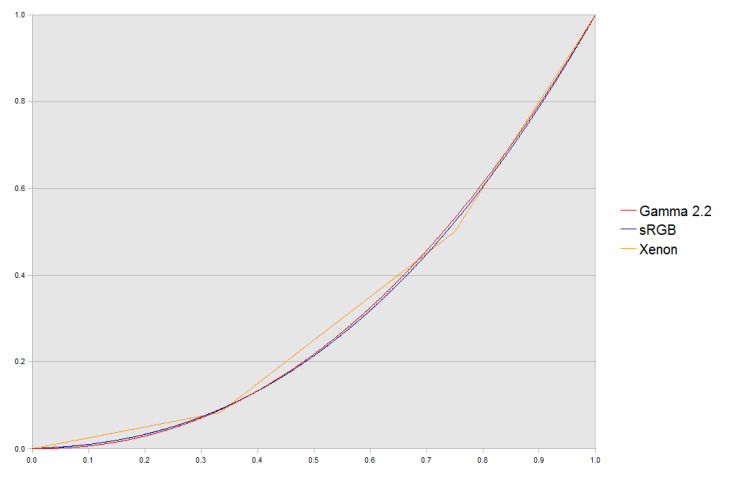

It is a standard RGB color space created by HP and Microsoft to be used on monitors, printers, and the Internet. sRGB is a slight tweaking of the simple gamma 2.2 curve.
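To make the "slight tweaking" concrete, here is a sketch of the commonly published sRGB transfer functions (a short linear segment near black, then a 2.4 power segment with an offset), compared against a plain 2.2 power curve. The function names are ours, and values are assumed to be normalized to [0, 1].

```python
def srgb_to_linear(c: float) -> float:
    """Decode one sRGB-encoded channel in [0, 1] to linear light."""
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def linear_to_srgb(c: float) -> float:
    """Encode one linear channel in [0, 1] with the sRGB curve."""
    if c <= 0.0031308:
        return 12.92 * c
    return 1.055 * c ** (1.0 / 2.4) - 0.055


# Compare the sRGB decode with a plain gamma 2.2 decode.
for encoded in (0.02, 0.25, 0.5, 0.75, 1.0):
    print(f"{encoded:.2f}: sRGB={srgb_to_linear(encoded):.4f} "
          f"gamma2.2={encoded ** 2.2:.4f}")
```

The two curves stay close over most of the range; the main difference is the linear segment for very dark values.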

Much software is now designed with the assumption that an 8-bit-per-channel image placed unchanged onto an 8-bit-per-channel display will appear much as the sRGB specification recommends. LCDs, digital cameras, printers, and scanners all follow the sRGB standard. Devices that do not naturally follow sRGB (such as older CRT monitors) include compensating circuitry or software so that, in the end, they also obey this standard.

sRGB has been mandatory since DirectX10 in 2008, and PS4 and Xbox One have the same high-quality sRGB support as their PC counterparts.

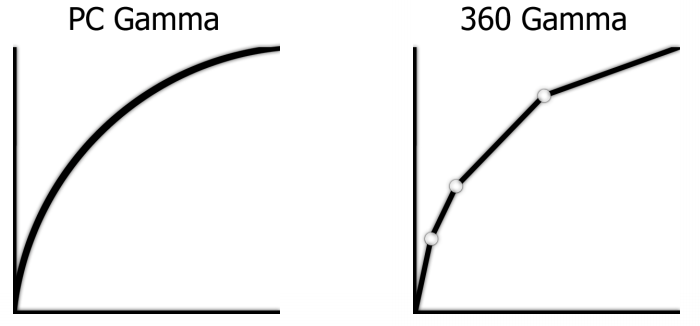

The PS3 does sRGB conversion before alpha blending, and the Xbox 360 has a really terrible approximation of sRGB.

When should I pay attention to gamma correction?

If you simply use image files to be displayed on the screen, you do not need to worry about gamma correction and all that stuff. As we explained, monitors are not linear, and to solve that problem, most image files are gamma-corrected, and they will probably look acceptable on most monitors. But then, if I am a graphics developer, when should I worry about it?

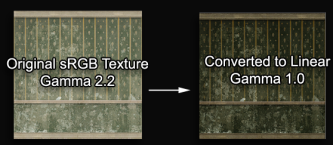

Most render engines and graphics APIs work in linear space internally.

But texture images already have gamma applied so they can be seen properly on screen (this does not apply to HDR image formats, as they are in linear space).

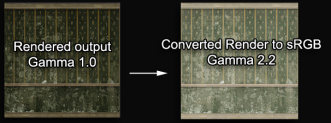

This means the resulting image (the render target in DirectX 12) has elements with mixed gamma.

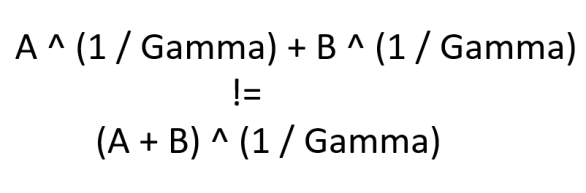

If you sum color values that are gamma-corrected, you will get the wrong values.

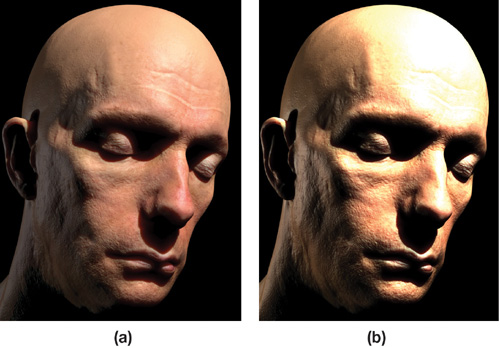
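A small numeric sketch of that problem, assuming a pure 2.2 power curve and two hypothetical lights that each contribute 0.2 units of linear light to the same pixel:

```python
GAMMA = 2.2  # assumed encoding exponent


def encode(linear: float) -> float:
    """Gamma-correct a linear value for display."""
    return linear ** (1.0 / GAMMA)


def decode(encoded: float) -> float:
    """Recover the linear light that an encoded value represents."""
    return encoded ** GAMMA


light_a = 0.2
light_b = 0.2

# Correct: add in linear space, then encode once for the display.
correct = encode(light_a + light_b)

# Wrong: add values that were already gamma-corrected.
wrong = encode(light_a) + encode(light_b)

print(f"correct signal={correct:.3f} wrong signal={wrong:.3f}")
print(f"light the wrong sum actually represents: {decode(wrong):.3f}")
```

The wrong sum lands close to white, even though the two lights together should only reach 0.4 units of linear light.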

And if you do not de-gamma textures, but apply gamma on the final frame buffer (render target), you double the gamma on the textures and colors that already had it, making them look washed out.
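The double-gamma case can be sketched the same way. The example assumes a pure 2.2 power curve and a single texel value of 0.5 stored in an already gamma-corrected texture; the function names are illustrative only.

```python
GAMMA = 2.2  # assumed exponent for both the texture encoding and the display


def encode(linear: float) -> float:
    """Gamma-correct a linear value when writing the final frame buffer."""
    return linear ** (1.0 / GAMMA)


def display(signal: float) -> float:
    """Model of the monitor: light emitted for a given signal."""
    return signal ** GAMMA


texel = 0.5  # value stored in a gamma-corrected texture

# Correct: de-gamma the texel on read, then encode once on write.
correct_signal = encode(texel ** GAMMA)

# Wrong: treat the stored value as linear and encode it a second time.
double_signal = encode(texel)

print(f"correct: signal={correct_signal:.3f} light={display(correct_signal):.3f}")
print(f"double : signal={double_signal:.3f} light={display(double_signal):.3f}")
```

With the double encoding the monitor emits more than twice the intended light for this texel, which is why the image looks washed out.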

You can detect this kind of problem by taking into account the following:

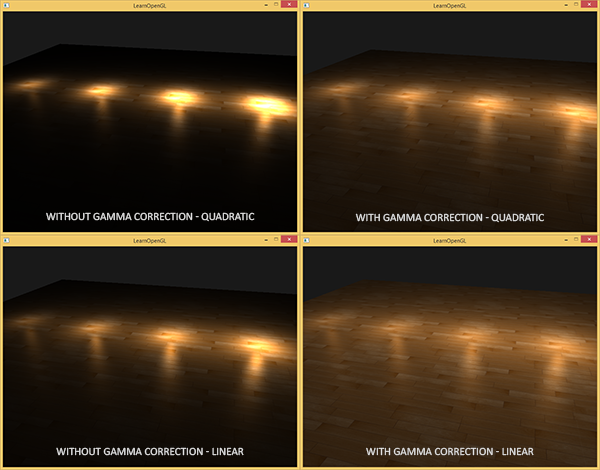

- Texture filtering assumes linear responses when averaging color values, so smaller textures (distant ones) will appear noticeably darker than larger ones.

- You are compensating for poor image quality by adding more lights and shader tricks.

- Color tones change when a non-linear input is brightened or darkened by lighting.
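The darkening of small textures in the first symptom can be checked with a tiny example: shrinking a 2x2 black-and-white checkerboard down to one texel. It assumes a pure 2.2 power curve and a simple box filter, which is a simplification of what real texture filtering does.

```python
GAMMA = 2.2  # assumed encoding exponent

# A 2x2 black-and-white checkerboard, stored gamma-encoded.
texels = [0.0, 1.0, 1.0, 0.0]

# Naive filtering: average the stored (encoded) values directly.
naive = sum(texels) / len(texels)

# Gamma-aware filtering: decode, average in linear space, re-encode.
linear_average = sum(t ** GAMMA for t in texels) / len(texels)
aware = linear_average ** (1.0 / GAMMA)

# Light the monitor would emit for each filtered texel.
naive_light = naive ** GAMMA
aware_light = aware ** GAMMA

print(f"naive: stored={naive:.3f} emitted={naive_light:.3f}")
print(f"aware: stored={aware:.3f} emitted={aware_light:.3f}")
```

Seen from a distance, the original checkerboard emits half the light of full white on average. Only the gamma-aware result preserves that; the naive average emits less than a quarter.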

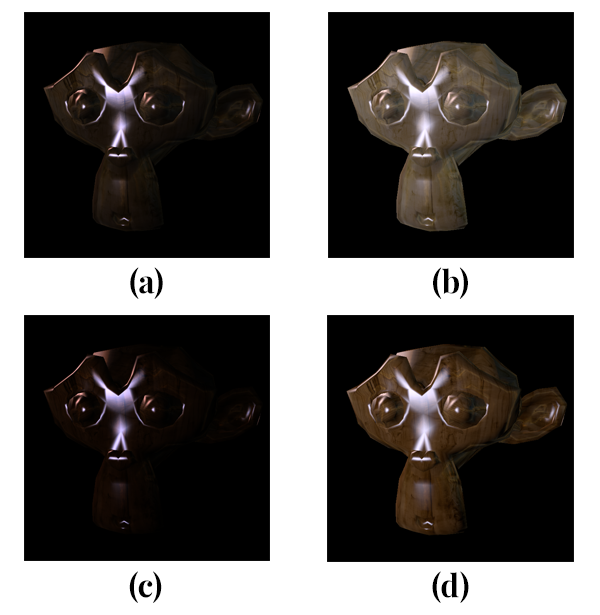

Gamma-corrected vs. Gamma-uncorrected examples

In this section, we will see some examples of how gamma-correctness affects our final image.

How can we solve these problems?

- Load images that are in gamma 2.2 or sRGB space as sRGB textures. DirectX and OpenGL read texture data through a shader sampler. If the texture is in sRGB color space, it is converted to linear space on read, which is the space we need.

- Avoid the previous tip with textures whose pixels do not represent color data, like normal maps, displacement maps, light maps, etc. Otherwise, you will be modifying their data incorrectly, and you will get the wrong results.

- Use sRGB format for your final render target that will be shown on the screen. When you write to an sRGB buffer, data is converted from linear space to sRGB space.

- Avoid the previous tip with your intermediate buffers or post-processing buffers. Otherwise, you will get the wrong results.

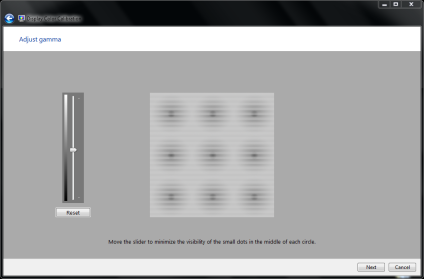

- Assume the user is using an uncalibrated and uncorrected monitor. Show a dialog for manual gamma calibration, for example.
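The texture and render-target tips in the list above can be sketched in software for a single pixel. In a real engine the GPU performs these conversions when textures and render targets use sRGB formats; the code below only emulates that flow. The function `shade_pixel`, its parameters, and the light pointing straight at the surface are all assumptions made for illustration.

```python
def srgb_to_linear(c: float) -> float:
    """Decode one sRGB-encoded channel in [0, 1] to linear light."""
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def linear_to_srgb(c: float) -> float:
    """Encode one linear channel in [0, 1] with the sRGB curve."""
    if c <= 0.0031308:
        return 12.92 * c
    return 1.055 * c ** (1.0 / 2.4) - 0.055


def shade_pixel(albedo_srgb, normal_z, light_intensity):
    """Hypothetical one-pixel pipeline: decode, light in linear space, encode."""
    # Color texture: convert from sRGB to linear on read.
    albedo = tuple(srgb_to_linear(c) for c in albedo_srgb)
    # Normal map: not color data, so it is used exactly as stored.
    # Assumption: the light points straight at the surface, so N.L = normal_z.
    n_dot_l = max(0.0, normal_z)
    # Lighting math (this is where intermediate buffers live) stays linear.
    lit = tuple(min(1.0, c * n_dot_l * light_intensity) for c in albedo)
    # Final render target: convert from linear to sRGB once, on write.
    return tuple(linear_to_srgb(c) for c in lit)


full = shade_pixel((0.5, 0.5, 0.5), normal_z=1.0, light_intensity=1.0)
half = shade_pixel((0.5, 0.5, 0.5), normal_z=0.5, light_intensity=1.0)
print("fully lit:", tuple(round(c, 3) for c in full))
print("half lit :", tuple(round(c, 3) for c in half))
```

A fully lit texel comes back unchanged, and halving the light halves the linear light rather than the stored value.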

Conclusion

Proper gamma correction is probably the easiest, most inexpensive, and most widely applicable technique for improving image quality in real-time applications. So instead of ignoring it and relying on light parameter tweaks, extra lights, shader tricks, and other hacks, you should implement a gamma-corrected pipeline.


Great info Nicolas. To consider adding to your guide:-

The majority of Windows desktops I’ve seen all over the world in all environments are installed using default “Generic PnP monitor”.

I’d suggest (if you have admin access) install correct drivers for monitor first, then do gamma correction. You will find monitor drivers by looking at the sticker on the monitor for exact model number, then Googling of course.

Of course most techs do not anticipate this as many times they are just supplied with the box (PC) to install Windows on and/or do it remotely, and never see the monitor.


Thanks James. I agree with you. A good guideline is to calibrate your monitors.
